When you are dealing with millions of events per day (in JSON format), you need a debugging tool for the events that do not behave as expected.

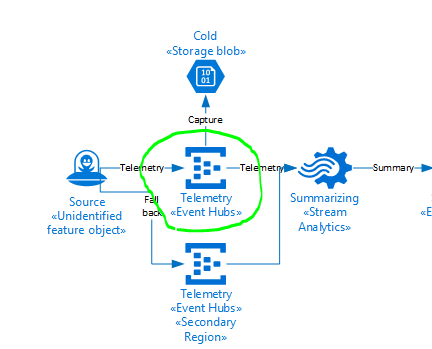

Recently we had an issue where an Azure Stream Analytics job was in a degraded state. A colleague eventually traced the problem to the job's output.

The error message was very misleading.

> [11:36:35] Source 'EventHub' had 76 occurrences of kind 'InputDeserializerError.TypeConversionError' between processing times '2020-03-24T00:31:36.1109029Z' and '2020-03-24T00:36:35.9676583Z'. Could not deserialize the input event(s) from resource 'Partition: [11], Offset: [86672449297304], SequenceNumber: [137530194]' as Json. Some possible reasons: 1) Malformed events 2) Input source configured with incorrect serialization format

The real source of the issue was Cosmos DB: we needed to increase its provisioned Request Units (RUs). The error message, however, pointed to a serialization problem with the input.

We developed a tool that can read events starting at exactly the point where the error occurred, using the partition and sequence number reported in the error message.
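The partition, offset and sequence number needed to start reading all appear in the error message quoted above, so this first step can be automated. The sketch below is a minimal Python illustration, not the tool's own code (the tool is a .NET console app). `EventPosition` and `parse_event_position` are hypothetical names, and the regular expression assumes the `Partition: [...], Offset: [...], SequenceNumber: [...]` wording shown in that message.

```python
import re
from typing import NamedTuple


class EventPosition(NamedTuple):
    """Location of a failing event, as reported in the Stream Analytics error."""
    partition: str        # Event Hubs partition IDs are strings, e.g. "11"
    offset: int
    sequence_number: int


# Matches the "Partition: [11], Offset: [...], SequenceNumber: [...]" fragment.
_POSITION_RE = re.compile(
    r"Partition:\s*\[(?P<partition>\d+)\],\s*"
    r"Offset:\s*\[(?P<offset>\d+)\],\s*"
    r"SequenceNumber:\s*\[(?P<seq>\d+)\]"
)


def parse_event_position(message: str) -> EventPosition:
    """Extract partition, offset and sequence number from an error message."""
    match = _POSITION_RE.search(message)
    if match is None:
        raise ValueError("no partition/offset/sequence number found in message")
    return EventPosition(
        partition=match.group("partition"),
        offset=int(match.group("offset")),
        sequence_number=int(match.group("seq")),
    )


if __name__ == "__main__":
    error = (
        "Could not deserialize the input event(s) from resource "
        "'Partition: [11], Offset: [86672449297304], SequenceNumber: [137530194]' as Json."
    )
    print(parse_event_position(error))
    # EventPosition(partition='11', offset=86672449297304, sequence_number=137530194)
```

The extracted values can then be handed to an Event Hubs client as a starting position. The .NET `Azure.Messaging.EventHubs` package, for example, provides `EventPosition.FromSequenceNumber` for use with `EventHubConsumerClient.ReadEventsFromPartitionAsync`. Whether the original tool uses that package is not stated.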

We also wanted the tool to cope with large volumes, roughly one million events per hour.
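For a sense of scale, one million events per hour corresponds to an average rate of:

$$
\frac{1\,000\,000\ \text{events}}{3600\ \text{s}} \approx 278\ \text{events/s}
$$

Bursts can exceed this average. Over a capture lasting a few hours, a tool that held every event in memory before writing would accumulate millions of them.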

Follow the link to the EventHub .NET client. The tool is optimised to use as little memory as possible, and it uses asynchronous file writes to keep event subscription fast (it is a console app, of course).
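Low memory use combined with asynchronous writes is commonly achieved by placing a bounded queue between the receive loop and a file writer. Below is a minimal Python sketch of that pattern. It is not the tool's actual .NET implementation, and it rests on a few assumptions. `receive` is a hypothetical stand-in for the Event Hubs receive callback. `max_in_flight` and `batch_size` are illustrative values. Events are written one per line to keep the example short.

```python
import asyncio
from typing import Iterable, List, Optional


async def receive(events: Iterable[str], queue: "asyncio.Queue[Optional[str]]") -> None:
    """Hypothetical stand-in for the Event Hubs receive loop."""
    for body in events:
        # put() suspends while the queue is full, so memory use stays bounded.
        await queue.put(body)
    await queue.put(None)  # sentinel: the receive loop is finished


async def write_events(queue: "asyncio.Queue[Optional[str]]", path: str,
                       batch_size: int) -> int:
    """Drain the queue and write events to `path` in batches; return the count."""
    written = 0
    batch: List[str] = []
    with open(path, "w", encoding="utf-8") as out:
        while True:
            body = await queue.get()
            if body is not None:
                batch.append(body + "\n")
            # Flush when the batch is full or the stream has ended.
            if batch and (len(batch) >= batch_size or body is None):
                # Run the blocking write in a worker thread so the event loop
                # can keep receiving while the disk I/O happens.
                await asyncio.to_thread(out.writelines, batch)
                written += len(batch)
                batch = []
            if body is None:
                return written


async def capture(events: Iterable[str], path: str,
                  max_in_flight: int = 1000, batch_size: int = 100) -> int:
    """Receive events and write them concurrently through a bounded queue."""
    queue: "asyncio.Queue[Optional[str]]" = asyncio.Queue(maxsize=max_in_flight)
    writer = asyncio.create_task(write_events(queue, path, batch_size))
    await receive(events, queue)
    return await writer


if __name__ == "__main__":
    count = asyncio.run(capture(['{"id": 1}', '{"id": 2}'], "events.txt"))
    print(count, "events written")
```

Because `queue.put` suspends when the queue is full, roughly `max_in_flight + batch_size` events at most are held in memory, however fast events arrive. `asyncio.to_thread` (Python 3.9+) keeps the blocking file write off the event loop.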

I purposely avoided the Newtonsoft.Json library for the final file write, to improve performance.

The output is a JSON array of events.
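A JSON library isn't needed to write that array, because each event body already arrives as serialized JSON text. The sketch below illustrates the idea in Python. The original tool is .NET and avoids Newtonsoft.Json; in Python, the equivalent saving is skipping a `json.loads`/`json.dumps` round trip per event. The sketch assumes each body is one complete JSON value that has already been decoded from bytes to UTF-8 text. `write_json_array` is a hypothetical name.

```python
import io
import json
from typing import Iterable, TextIO


def write_json_array(bodies: Iterable[str], out: TextIO) -> int:
    """Stream already-serialized JSON event bodies into one JSON array.

    Bodies are copied verbatim: nothing is parsed or re-serialized. The
    iterable is consumed lazily, so with a lazy source only the current body
    needs to be in memory. Returns the number of events written.
    """
    count = 0
    out.write("[")
    for body in bodies:
        if count:
            out.write(",")  # separator between elements, never before the first
        out.write("\n")
        out.write(body)
        count += 1
    out.write("\n]" if count else "]")
    return count


if __name__ == "__main__":
    buffer = io.StringIO()
    write_json_array(['{"id": 1}', '{"id": 2}'], buffer)
    # Parsing here only checks that the result is a valid JSON array.
    print(json.loads(buffer.getvalue()))  # [{'id': 1}, {'id': 2}]
```

The trade-off is that bodies are never validated. A malformed event, which may be exactly the one you are hunting, is written as-is and makes the file as a whole invalid JSON, so you may need to inspect such a file as text rather than parse it.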

The next time you need to subscribe to an event hub to diagnose an issue with a particular event, I recommend using this tool to capture the events you want to analyse.

Thank you.
